2024-05-11 16:45:22

Hands-On GPU-Accelerated Computer Vision with OpenCV and CUDA [Book]

How do I copy data from CPU to GPU in a C++ process and run TF in another python process while pointing to the copied memory? - Stack Overflow

Executing a Python Script on GPU Using CUDA and Numba in Windows 10 | by Nickson Joram | Geek Culture | Medium

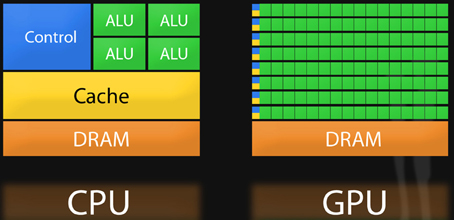

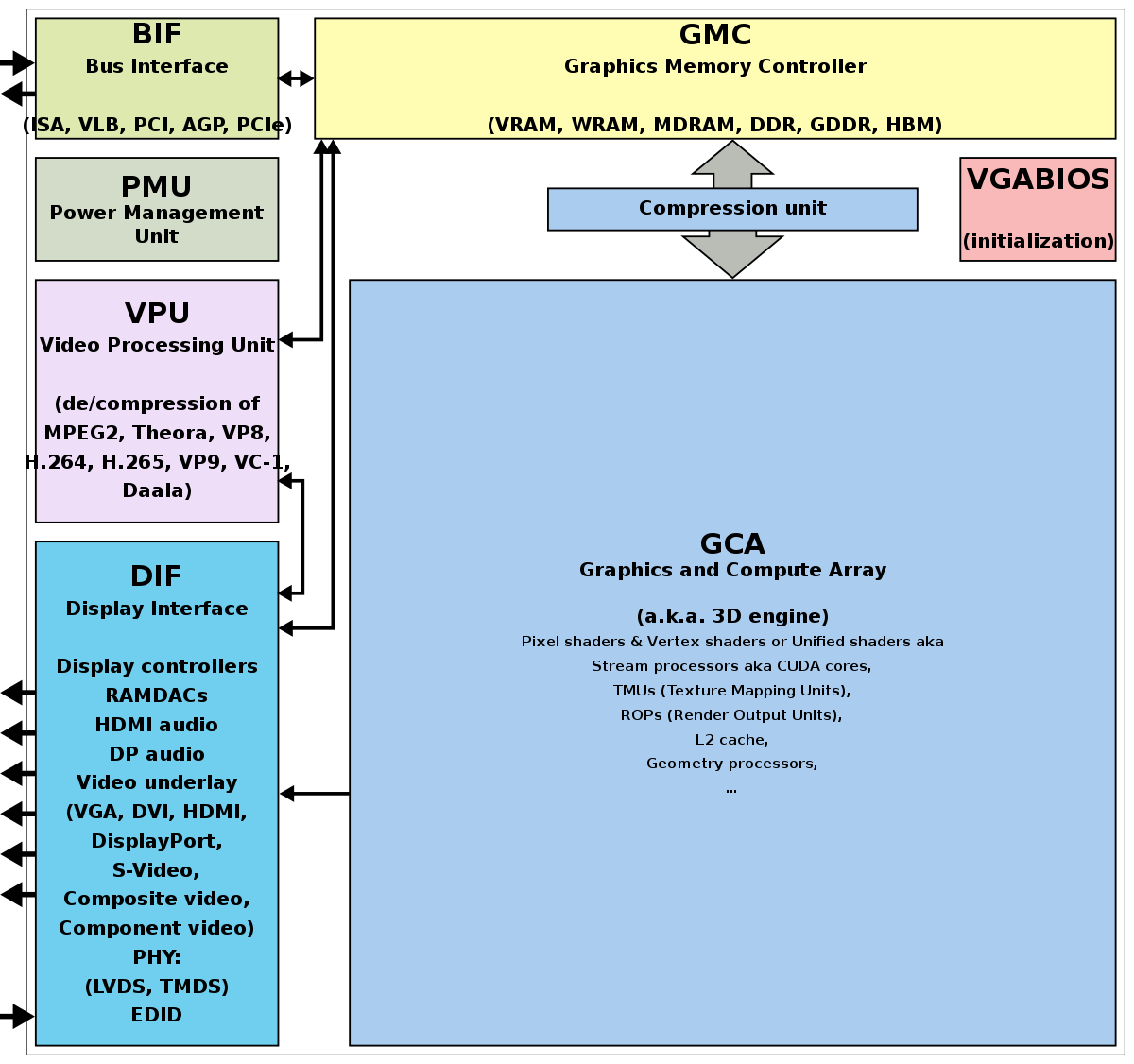

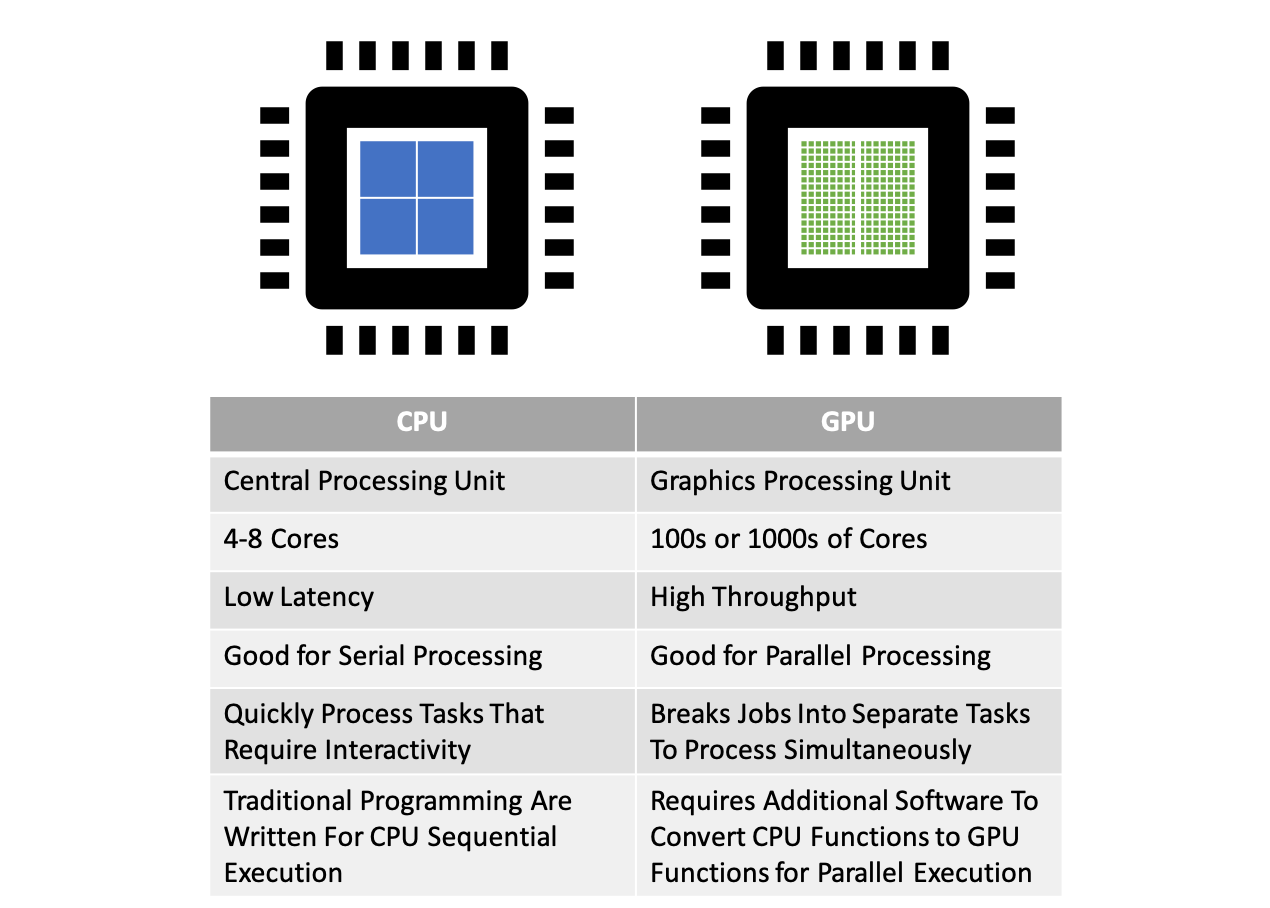

Massively parallel programming with GPUs — Computational Statistics in Python 0.1 documentation

Parallel Computing — Upgrade Your Data Science with GPU Computing | by Kevin C Lee | Towards Data Science

Unknown python process using alla available GPU memory? - Stack Overflow

Learn to use a CUDA GPU to dramatically speed up code in Python. - YouTube

How We Boosted Video Processing Speed 5x by Optimizing GPU Usage in Python | by Lightricks Tech Blog | Medium

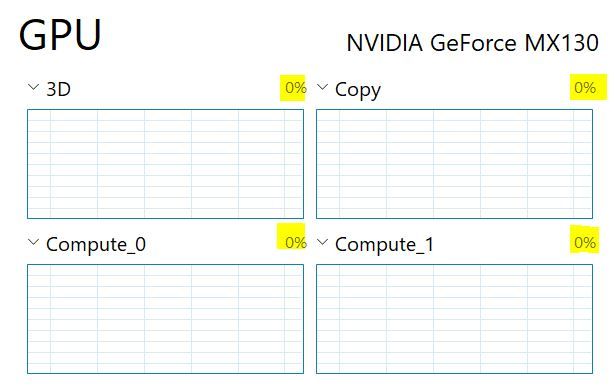

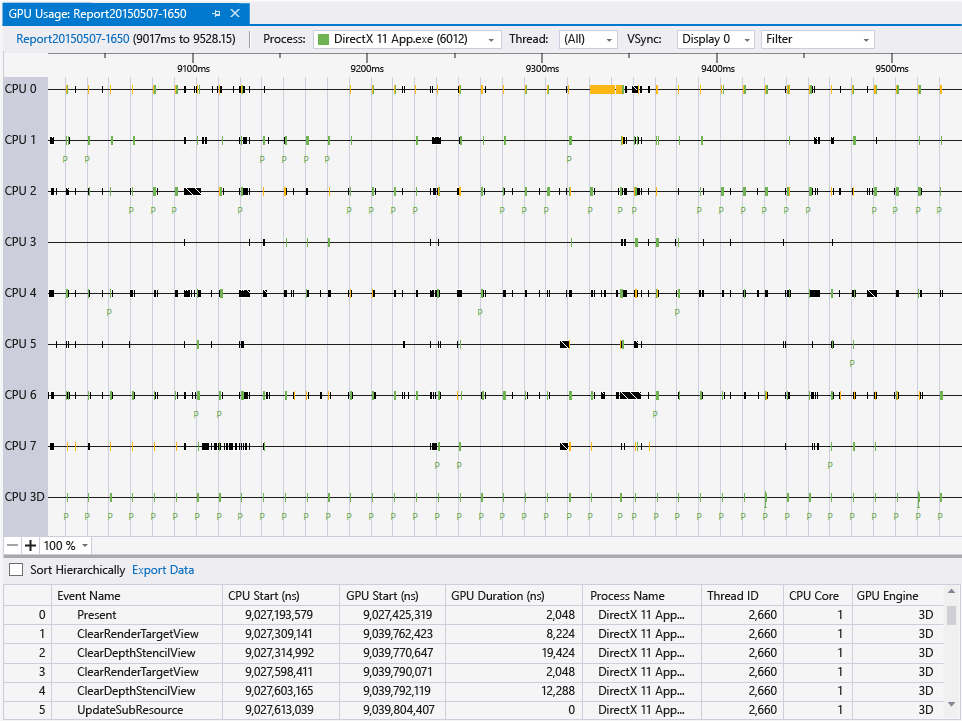

GPU usage - Visual Studio (Windows) | Microsoft Learn

How to make Python Faster. Part 3 — GPU, Pytorch etc | by Mayur Jain | Python in Plain English

Productive and Efficient Data Science with Python: With Modularizing, Memory profiles, and Parallel/GPU Processing : Sarkar, Tirthajyoti: Amazon.in: Books

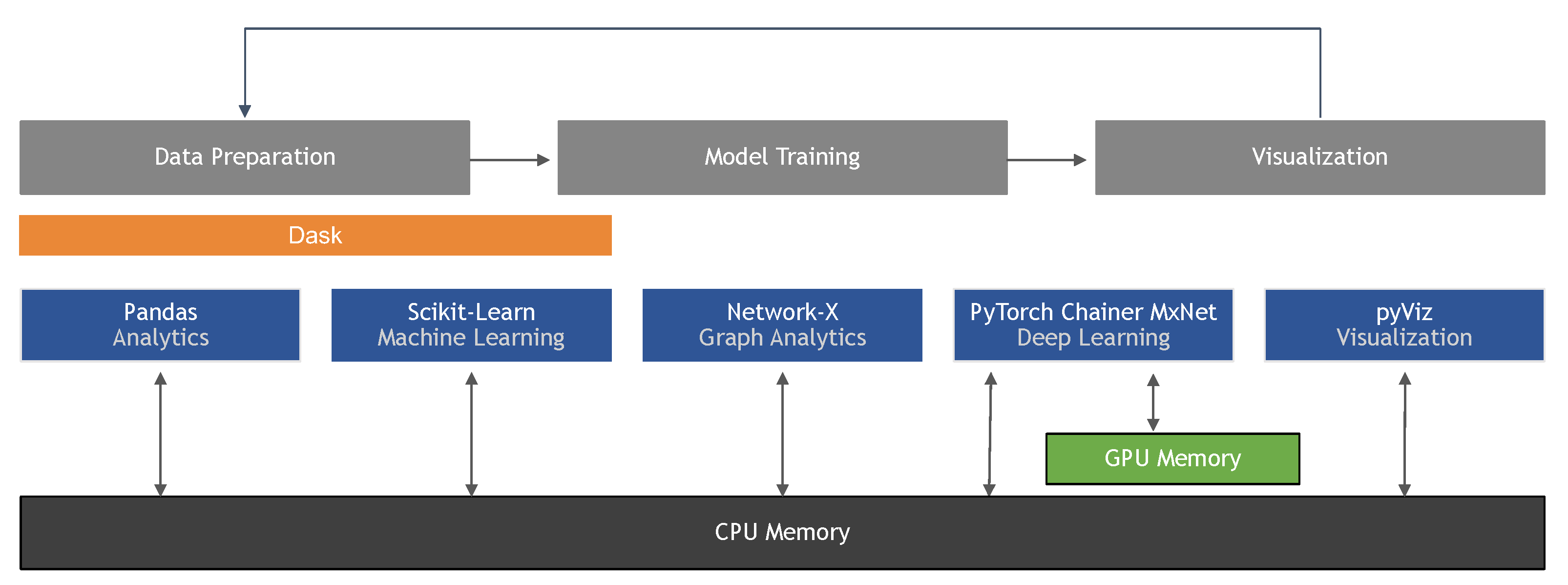

Information | Free Full-Text | Machine Learning in Python: Main Developments and Technology Trends in Data Science, Machine Learning, and Artificial Intelligence

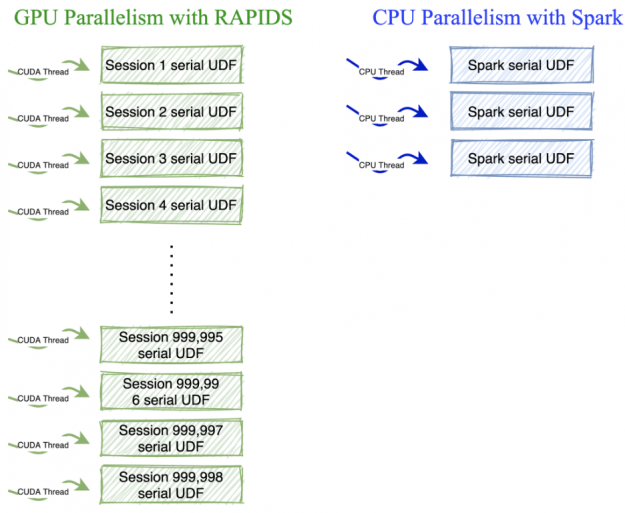

Accelerating Sequential Python User-Defined Functions with RAPIDS on GPUs for 100X Speedups | NVIDIA Technical Blog

How to measure GPU usage per process in Windows using python? - Stack Overflow